SQL - Cafe Sales and Data Cleaning☕

Project Overview

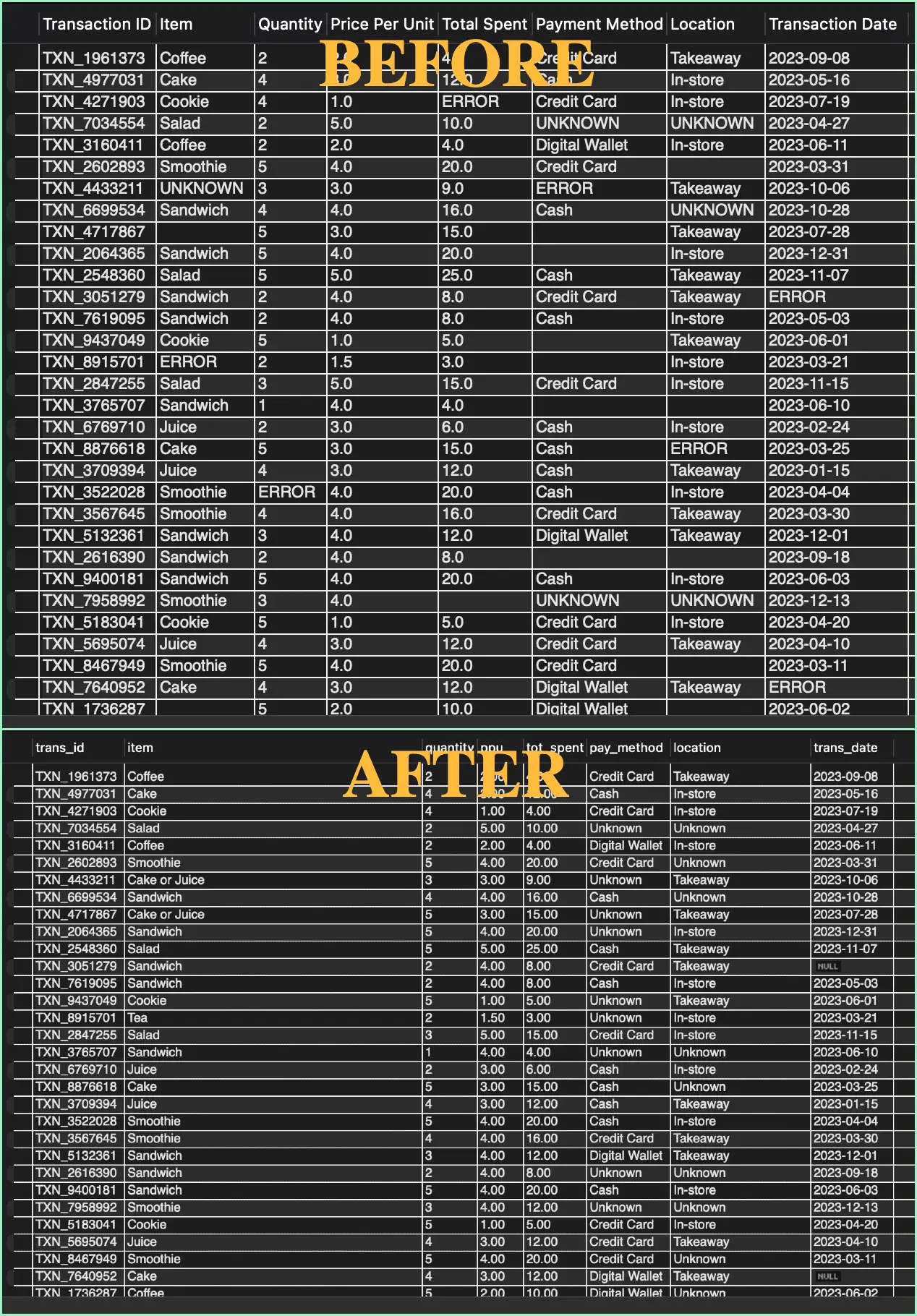

Situation: A raw dataset of 10,000 cafe transactions

was ingested with significant quality issues,

including missing values, incorrect data types (text in numeric fields), and generic "ERROR" strings.

This rendered the data unusable for revenue reporting.

Technologies Used:

- SQL

- MySQL

My steps:

- 1. Staging: Created a secure staging environment to prevent data loss.

- 2. Deduplication: Used window functions to identify and remove duplicate transaction entries.

- 3. Type Enforcement: Cleaned text errors from numeric columns (REGEXP) and enforced strict INT and DECIMAL data types.

- 4. Logical Imputation: Instead of deleting rows with missing items, I wrote logic to infer the item name based on its price.

- 5. Revenue Recovery: Recalculated missing Total Spent values using the formulas, saving 460+ rows from being discarded.

- 6. Archiving: Segregated completely unfixable data into a garbage_table to maintain a clean "Production" environment without losing audit trails.

Results:

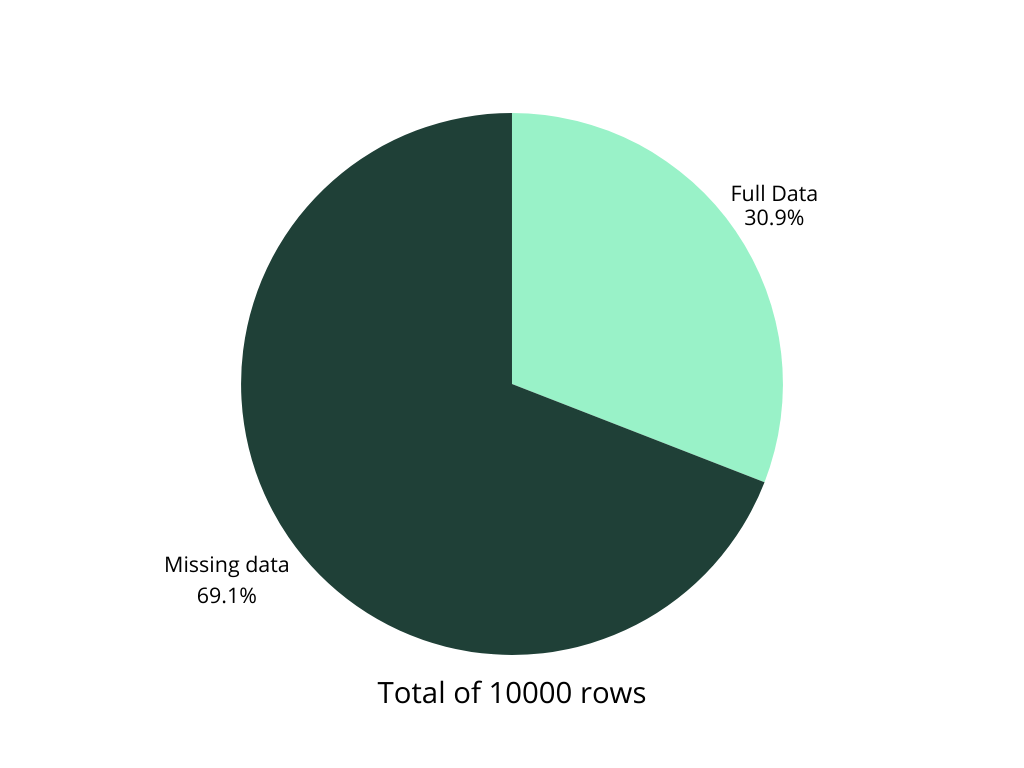

- Before: 69% of data was unusable or mathematically incorrect.

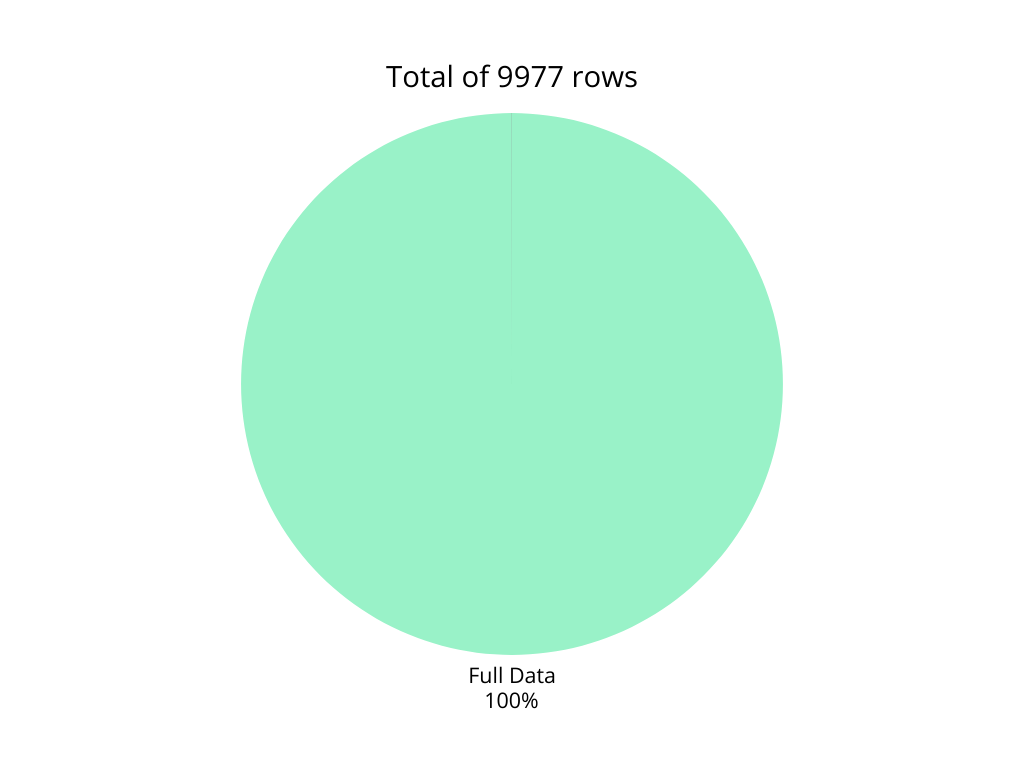

- After: 99.7% Data Usability: Successfully recovered nearly all transaction records.

- Structural Integrity: Converted the table from a flat text file into a strictly typed database (INT, DECIMAL, DATE).

- Analysis-Ready: The data is now optimized for BI tools, with 0 math discrepancies and standardized categorical labels.